AI-Generated vs. Human-Led Apps: Maximizing Development Success

The debate over whether AI will replace human developers is reshaping how founders allocate budgets, hire teams, and plan product roadmaps. Many in the tech sector assume AI tools will soon make engineers obsolete, but the reality is far more nuanced. Hybrid workflows outperform both pure AI and pure human approaches, with each side contributing distinct strengths. This article breaks down the evidence, clarifies the real tradeoffs, and gives you a practical framework for choosing the right development strategy for your startup.

Key Takeaways

| Point | Details |
|---|---|
| Hybrid wins | Mixing AI and human expertise leads to faster, higher-quality app development than using either alone. |
| AI speeds up routine work | AI tools handle repetitive coding tasks quickly but stumble on complexity and judgment. |
| Humans ensure quality | Senior developers are crucial for architecture, edge cases, and long-term maintainability. |
| Context matters | Choose your approach based on your app's complexity, team experience, and project phase for the best results. |

What are AI-generated apps, human-led development, and hybrid workflows?

Before comparing outcomes, it helps to define what we're actually talking about. These three models represent very different ways of building software, and confusing them leads to poor decisions.

AI-generated apps are built primarily with AI coding tools that write code, handle boilerplate, scaffold UI components, and automate repetitive workflows. GitHub Copilot, Cursor, and GPT-4-based code assistants are typical examples of these tools. You can explore how AI-native app workflows are reshaping startup development in 2026.

Human-led development relies on experienced engineers driving every stage: architecture decisions, problem-solving, code quality, and system design. Judgment and context are central to this model.

Hybrid workflows combine both. AI handles speed and automation while humans provide oversight, architectural thinking, and quality control. Our hybrid development guide covers how startups are structuring these teams effectively.

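The core difference comes down to how each approach processes problems. AI coding tools generate code by statistical prediction: they produce the most likely next tokens given the surrounding context. Experienced engineers, by contrast, reason explicitly about requirements, constraints, and consequences. That distinction matters enormously when your app hits edge cases or novel requirements.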

| Approach | Speed | Quality control | Best for |
|---|---|---|---|
| AI-generated | ✔ Very fast | ⚠ Needs review | MVPs, boilerplate, prototypes |
| Human-led | Moderate | ✔ High | Complex systems, novel domains |
| Hybrid | ✔ Fast | ✔ Strong | Scaling startups, production apps |

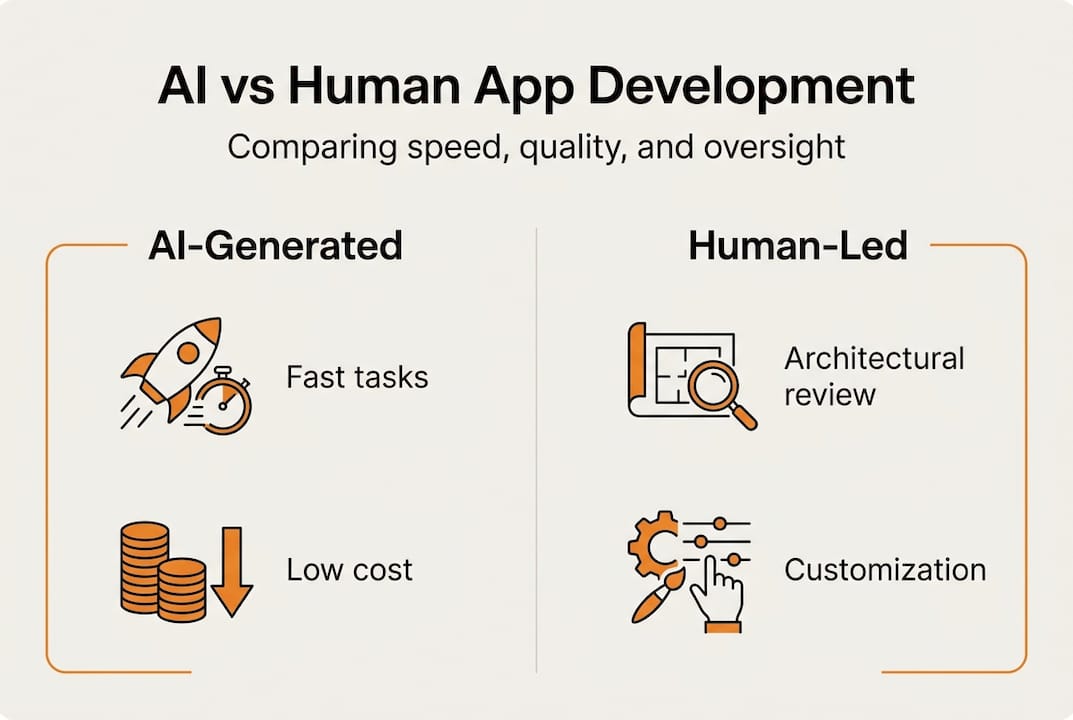

Key strengths and weaknesses at a glance:

🚀 AI: Rapid code generation, low cost for repetitive tasks, accessible to junior developers

📉 AI: Hallucinations, missing architectural context, poor judgment on ambiguous requirements

✔ Human: Deep problem-solving, system-wide consistency, innovation

📉 Human: Slower for routine work, higher cost per feature

📊 Hybrid: Best of both, but requires strong review processes

Key productivity findings: How AI, humans, and hybrids perform

With the definitions clear, let's look at what the data actually shows. Founders need numbers, not opinions.

Hybrid teams outperform both pure AI and pure human setups. AI is 2 to 3 times faster on structured, mechanical tasks like CRUD operations, basic APIs, and authentication flows. Humans remain superior for complex architecture, ambiguous requirements, and debugging unfamiliar systems.

📊 Statistic callout: GitHub Copilot increased tasks completed by 26% in controlled studies, with real-world gains ranging from 12% to 25% depending on developer experience. Junior developers saw the largest productivity boosts.

| Metric | AI-only | Human-only | Hybrid |
|---|---|---|---|
| Speed on routine tasks (vs. human-only) | 2-3x faster | Baseline | 1.5-2x faster |
| Code quality | Variable | High | High with review |
| Debugging complex bugs | Weak | Strong | Strong |
| Junior dev productivity | High boost | Moderate | Highest overall |
| Architectural decisions | Poor | Excellent | Excellent |

"The productivity gains from AI tools are real, but they are not uniform. Context, oversight, and team experience determine whether those gains translate into shipping better products faster."

Pro Tip: If your team includes mostly junior developers, AI tools can close skill gaps quickly. But pair every AI-assisted sprint with at least one senior review cycle to catch structural issues before they compound.

For a broader view of how AI is reshaping app revenue outcomes, and of which industry-specific advantages matter most, it is worth reviewing resources on those topics alongside this data.

Strengths and weaknesses: Where AI-generated and human-led excel (and fail)

The evidence points toward hybrids, but neither approach is risk-free. Here's where each shines or stumbles in real projects.

Where AI-generated code excels:

Generating repetitive templates and boilerplate in minutes

Scaffolding UI components and standard API integrations

Accelerating implementation of new features that have clear specifications

Where AI-generated code fails:

Losing architectural context, drifting in large systems, and failing at causal reasoning

Hallucinating plausible-looking but incorrect solutions

Overlooking security vulnerabilities and edge cases in complex flows

Where human-led development excels:

Solving ambiguous, novel, or high-stakes problems

Maintaining system-wide consistency across a growing codebase

Mentoring junior engineers and preserving institutional knowledge

Where human-led development fails:

Slow and expensive for routine, well-defined tasks

Bottlenecks when senior engineers are stretched thin

Hybrid workflows introduce their own challenge. AI productivity gains can be offset by the extra debugging and review burden placed on senior engineers. They can also be offset by a drop in motivation when developers feel reduced to code reviewers rather than builders. This combined cost is sometimes called the "productivity tax."

"The broken apprenticeship cycle is a real risk. When junior developers rely on AI to generate code they don't fully understand, they miss the learning that builds senior-level judgment over time."

Pro Tip: Always pair AI coding tools with senior architecture reviews. This is especially critical for app security, where AI-generated code can introduce subtle vulnerabilities that only experienced engineers catch.

Real-world examples: How startups use AI, humans, and hybrid teams

To see these dynamics in action, consider how real startups have implemented these approaches, with very different outcomes.

AI-centric MVP validation: 25% of YC startups in recent cohorts report using AI for 95% of their initial code. The upside is speed and cost savings during prototype and validation phases. The downside appears later: tech debt accumulates, scaling becomes painful, and the absence of senior architectural decisions creates rework.

Human-led teams adding AI: Several startups began with fully human-led teams, then introduced AI tools to accelerate routine work. These teams reported fewer launch setbacks and higher code quality, but slower initial iteration cycles. Adding AI to handle boilerplate freed senior engineers to focus on product differentiation.

Hybrid from day one: Startups that structured hybrid teams early, with AI handling scaffolding and humans owning architecture and QA, achieved the best balance of speed and quality. They iterated rapidly during early product discovery while minimizing the tech debt that slows scaling.

"Startups die from no product-market fit far more often than from bad code. But bad code will kill you once you find that fit and try to scale."

You can review AI-native app case studies for more examples, or connect with expert development advisors to map these patterns to your specific product context.

How to choose the right approach for your startup

With real cases explored, here's a practical guide for choosing and shifting your development strategy based on where your startup actually is.

Evaluate your app's complexity:

📌 Mechanical, well-defined MVPs: AI tools are appropriate and cost-effective

📌 Complex domains, novel logic, or regulated industries: Human or hybrid teams are necessary

📌 Hybrid oversight is essential when requirements are ambiguous or evolving rapidly

Assess your team's experience level:

Junior-heavy teams benefit most from AI productivity tools but need senior oversight

Senior engineers should own architecture regardless of how much AI assists

Match your budget and timeline:

AI accelerates repetitive tasks and reduces early-stage costs

Hybrid teams control tech debt and reduce costly rewrites later

Pure human teams offer the highest quality but the slowest initial pace

Consider your organizational maturity:

Early-stage startups can go lean with AI for initial validation, then transition to hybrid as product complexity grows

Growth-stage companies should prioritize human oversight to protect product-market fit gains

Quick decision checklist:

✔ Map every major feature to its complexity level

✔ Identify which features carry the highest risk if they fail

✔ Assign AI tools to low-risk, high-repetition tasks

✔ Reserve human judgment for architecture, security, and user experience decisions
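The checklist above can be expressed as a simple triage rule for sprint planning. The sketch below is a minimal Python illustration, not a prescribed method. The `Feature` fields, the 1-to-3 scoring scales, and the thresholds are all hypothetical assumptions you would tune to your own product. It does not model architecture or UX decisions, which still need human judgment.

```python
from dataclasses import dataclass
from enum import Enum


class Track(Enum):
    AI_ASSISTED = "AI-assisted, human-reviewed"
    HYBRID = "hybrid (AI drafts, senior engineer owns design)"
    HUMAN_LED = "human-led"


@dataclass
class Feature:
    name: str
    complexity: int        # hypothetical scale: 1 = mechanical, 3 = novel logic
    failure_risk: int      # hypothetical scale: 1 = cosmetic, 3 = data loss or outage
    repetitive: bool       # e.g. CRUD endpoints, form scaffolding
    touches_security: bool = False


def assign_track(feature: Feature) -> Track:
    """Map a feature to a development track (illustrative thresholds only)."""
    # Security-sensitive or high-risk work stays with human engineers.
    if feature.touches_security or feature.failure_risk >= 3:
        return Track.HUMAN_LED
    # Low-risk, repetitive, mechanical work is a good fit for AI tools.
    if feature.repetitive and feature.complexity == 1 and feature.failure_risk == 1:
        return Track.AI_ASSISTED
    # Everything in between: AI accelerates, a senior engineer decides.
    return Track.HYBRID


if __name__ == "__main__":
    backlog = [
        Feature("Admin CRUD screens", complexity=1, failure_risk=1, repetitive=True),
        Feature("Recommendation engine", complexity=3, failure_risk=2, repetitive=False),
        Feature("Payment checkout", complexity=2, failure_risk=3, repetitive=False,
                touches_security=True),
    ]
    for feature in backlog:
        print(f"{feature.name}: {assign_track(feature).value}")
```

Keeping the rule explicit, even if it is this crude, makes the split between AI-owned and human-owned work visible and easy to revisit as the product grows.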

Best practices for integrating AI tools and human oversight

After deciding on a strategy, apply these process improvements for the best results with hybrid or AI-enhanced teams.

Use AI for scaffolding and templates. Let AI tools generate CRUD code, UI scaffolding, and standard integrations. This is where hybrid workflows cut the time spent on repetitive work and enable faster iteration without sacrificing quality.

Enforce human architectural review. Every integration point, security boundary, and data model decision should pass through a senior engineer. Review modern UI practices to ensure AI-generated components meet current design standards.

Run integrated QA and testing cycles. Don't treat AI-generated code as tested code. Build automated test coverage and manual review into every sprint. Strong user experience strategies depend on catching UX regressions early.
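To make "don't treat AI-generated code as tested code" concrete, here is a minimal sketch using Python's built-in `unittest`. The `paginate` helper is a hypothetical stand-in for an AI-drafted function. The point is the set of edge-case tests a human reviewer adds around it: empty input, a partial last page, a page past the end, and invalid arguments. These are the kinds of cases generated code can overlook.

```python
import unittest


def paginate(items: list, page: int, page_size: int) -> list:
    """Return the items on a 1-indexed page (hypothetical AI-drafted helper)."""
    if page_size <= 0:
        raise ValueError("page_size must be positive")
    if page < 1:
        raise ValueError("page must be >= 1")
    start = (page - 1) * page_size
    return items[start:start + page_size]


class PaginateEdgeCases(unittest.TestCase):
    """Edge-case tests a reviewer adds before the helper ships."""

    def test_full_page(self):
        self.assertEqual(paginate(list(range(10)), 1, 3), [0, 1, 2])

    def test_partial_last_page(self):
        self.assertEqual(paginate(list(range(10)), 4, 3), [9])

    def test_page_past_end_is_empty(self):
        self.assertEqual(paginate(list(range(10)), 5, 3), [])

    def test_empty_input(self):
        self.assertEqual(paginate([], 1, 3), [])

    def test_rejects_invalid_arguments(self):
        with self.assertRaises(ValueError):
            paginate([1, 2], 0, 3)
        with self.assertRaises(ValueError):
            paginate([1, 2], 1, 0)


if __name__ == "__main__":
    # exit=False keeps the interpreter running after the test report;
    # a fixed argv ignores any command-line arguments passed to the script.
    unittest.main(argv=["paginate-tests"], exit=False)
```

In a real project these tests would run automatically in CI on every sprint, so AI-generated changes are held to the same bar as hand-written ones.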

Invest in code reviews and pair programming. This directly counters the hallucinations and context drift that AI tools introduce. It also preserves the mentorship pipeline that builds your next generation of senior engineers.

Monitor AI accuracy continuously. AI tools evolve quickly, and their failure modes shift. Staying current on AI accuracy best practices helps you calibrate how much to trust generated outputs at any given time.
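One lightweight way to monitor accuracy is to track how often merged changes later need rework, split by whether they were AI-assisted. The Python sketch below assumes a hypothetical record format. The `source` labels and the definition of `needed_fix` (for example, reverted or linked to a bug report) are choices your team would make. The sample records are illustrative, not real data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class MergedChange:
    change_id: str
    source: str        # hypothetical label: "ai-assisted" or "human"
    needed_fix: bool   # e.g. later reverted or linked to a bug report


def rework_rate_by_source(changes: list[MergedChange]) -> dict[str, float]:
    """Return, per source, the share of merged changes that later needed a fix."""
    totals: dict[str, int] = defaultdict(int)
    fixes: dict[str, int] = defaultdict(int)
    for change in changes:
        totals[change.source] += 1
        if change.needed_fix:
            fixes[change.source] += 1
    return {source: fixes[source] / totals[source] for source in totals}


if __name__ == "__main__":
    # Illustrative records only, not real measurements.
    history = [
        MergedChange("c1", "ai-assisted", needed_fix=True),
        MergedChange("c2", "ai-assisted", needed_fix=False),
        MergedChange("c3", "ai-assisted", needed_fix=False),
        MergedChange("c4", "ai-assisted", needed_fix=False),
        MergedChange("c5", "human", needed_fix=False),
        MergedChange("c6", "human", needed_fix=True),
    ]
    print(rework_rate_by_source(history))
```

Watching this ratio sprint over sprint, rather than as a one-off number, gives a rough signal for whether to extend or tighten how much generated output you trust.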

Pro Tip: Assign clear ownership. AI owns speed. Humans own quality. When those roles blur, both suffer. Define in your sprint process exactly which tasks go to AI tools and which require human authorship from the start.

Pitfalls to avoid:

📉 Underestimating the review cost of large volumes of AI-generated code

📉 Skipping onboarding documentation because AI "can explain the code"

📉 Letting junior engineers ship AI-generated code without senior sign-off
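The last pitfall, and the earlier rule that security boundaries and data models pass through a senior engineer, can be encoded as a merge gate. This Python sketch is a hypothetical policy check, not a feature of any particular tool. The `PullRequest` fields and the `SENSITIVE_PREFIXES` paths are assumptions. In practice, the inputs would come from your code host's pull request metadata.

```python
from dataclasses import dataclass

# Hypothetical path prefixes treated as security boundaries or data models.
SENSITIVE_PREFIXES = ("auth/", "payments/", "migrations/", "models/")


@dataclass
class PullRequest:
    author_is_senior: bool
    ai_assisted: bool
    changed_files: list[str]
    senior_approvals: int = 0


def needs_senior_signoff(pr: PullRequest) -> bool:
    """Decide whether a change must be approved by a senior engineer."""
    # str.startswith accepts a tuple, so this checks every sensitive prefix.
    touches_sensitive = any(path.startswith(SENSITIVE_PREFIXES) for path in pr.changed_files)
    # Security boundaries and data models always get senior review.
    if touches_sensitive:
        return True
    # AI-assisted code from non-senior authors never ships without sign-off.
    return pr.ai_assisted and not pr.author_is_senior


def can_merge(pr: PullRequest) -> bool:
    """Allow the merge only if any required senior approval is present."""
    return not needs_senior_signoff(pr) or pr.senior_approvals >= 1


if __name__ == "__main__":
    pr = PullRequest(author_is_senior=False, ai_assisted=True, changed_files=["ui/button.tsx"])
    print(needs_senior_signoff(pr), can_merge(pr))
```

On GitHub, a `CODEOWNERS` file combined with a branch protection rule requiring code owner review can enforce the path-based part of this policy without custom code. The AI-assisted check would need a team convention such as a pull request label.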

Accelerate your app's success with expert guidance

Choosing between AI-generated, human-led, or hybrid development is one of the most consequential decisions you'll make as a founder. Getting it wrong costs time, money, and momentum.

At TouchZen Media, we work with startups across tech sectors to design and build apps that balance AI speed with human-quality oversight. Whether you need a lean MVP or a production-ready platform, our team of expert app developers brings both strategic clarity and hands-on execution. We're recognized among top UX design leaders and leading mobile agencies for a reason: we treat your product like it's our own. Let's build something that scales.

Frequently asked questions

Is it cheaper to use AI-generated apps than hiring developers?

AI-generated apps often reduce initial costs for repetitive tasks, but still need human oversight for complex requirements, which adds review and debugging costs that founders frequently underestimate.

Do AI coding tools replace the need for senior engineers?

No. AI tools assist with speed and repetition, but AI lacks persistent architectural memory, making senior engineers essential for system design, judgment calls, and debugging at scale.

What's the best team structure for a startup building apps with AI?

A hybrid team is currently the most effective setup. Hybrid workflows outperform both pure AI and pure human approaches by combining automation speed with human quality control.

Are there risks to using AI for all my app code?

Yes. AI drifts in large systems and fails at causal reasoning and novel edge cases, meaning fully AI-generated codebases often require significant human debugging before they're production-ready.